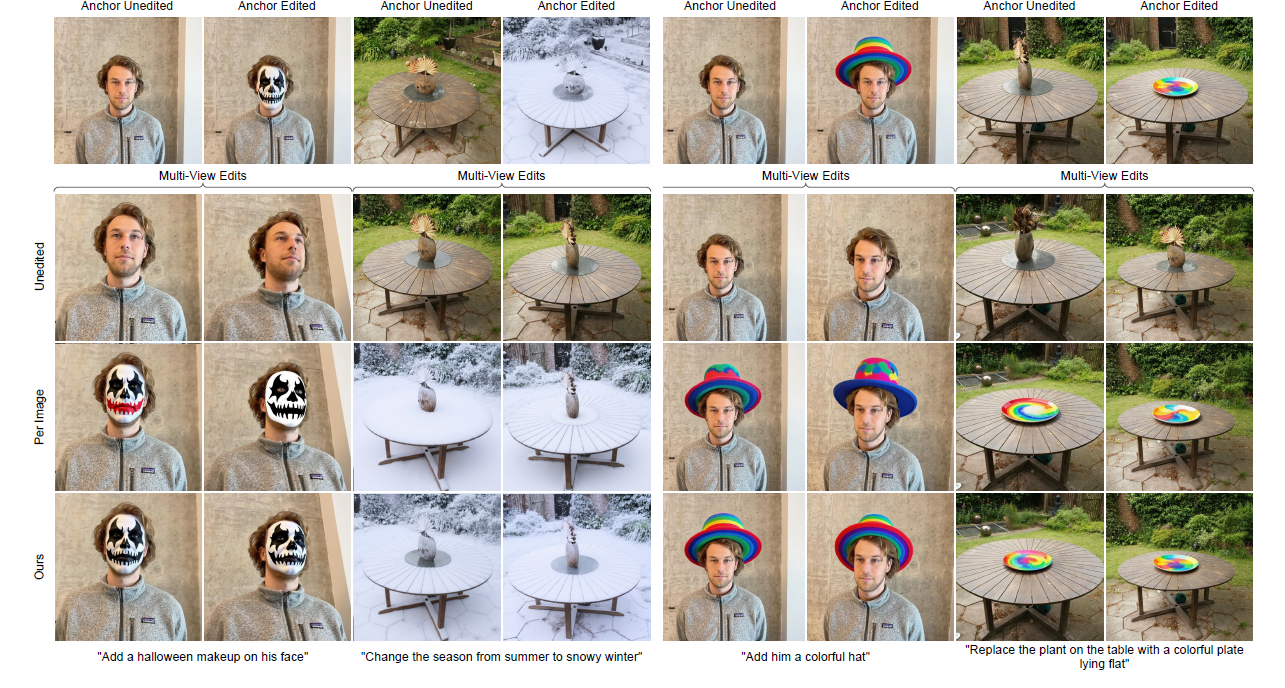

Multi-view Consistent Editing

Image editing methods applied independently to multi-view images often produce inconsistent edits across views. Our method improves multi-view consistency and can easily be combined with different 2D editing methods.

Edited Multi-View Images with FLUX.1

Method

Our method guides the diffusion or flow-matching editing process to improve multi-view consistency.

Given a set of input images, each view is edited sequentially, with the denoising process guided by the previously edited images. The guidance rests on the assumption that matching points in the unedited images should be edited similarly. During denoising, the noise estimate $\epsilon(z_t,t)$ is modified according to a consistency loss $\mathcal{L}_c$, resulting in multi-view consistent edits.
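The guidance idea can be illustrated with a toy sketch. This is not the authors' implementation: it assumes a classifier-guidance-style update $\hat{\epsilon} = \epsilon + s \, \nabla_{z} \mathcal{L}_c$, a simple squared-difference loss evaluated directly on the latent at matched pixels, and hypothetical names (`consistency_loss_and_grad`, `guided_noise`, `matches`, `scale`) that do not come from the page. The exact loss and the way it enters the noise estimate in the actual method may differ.

```python
import numpy as np

def consistency_loss_and_grad(z, z_ref, matches):
    """Toy consistency loss: mean squared difference at matched pixels.

    z, z_ref : arrays of shape (H, W, C) for the current view and a
               previously edited view (assumed representation).
    matches  : list of ((y, x), (y_ref, x_ref)) pixel correspondences
               (assumed to come from matching the unedited images).
    Returns (loss, dloss/dz).
    """
    grad = np.zeros_like(z, dtype=float)
    loss = 0.0
    for (y, x), (yr, xr) in matches:
        diff = z[y, x] - z_ref[yr, xr]
        loss += float(diff @ diff)
        grad[y, x] += 2.0 * diff
    n = max(len(matches), 1)
    return loss / n, grad / n

def guided_noise(eps, z, z_ref, matches, scale=1.0):
    """Assumed guidance rule: shift the noise estimate along the loss gradient.

    Because a denoising step subtracts (a multiple of) the noise estimate,
    adding the gradient to eps moves the sample toward lower loss.
    """
    _, grad = consistency_loss_and_grad(z, z_ref, matches)
    return eps + scale * grad
```

In this sketch, a simplified denoising step `z - guided_noise(eps, z, z_ref, matches)` changes only the matched pixels and pulls them toward their counterparts in the previously edited view, while unmatched pixels follow the unmodified noise estimate.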

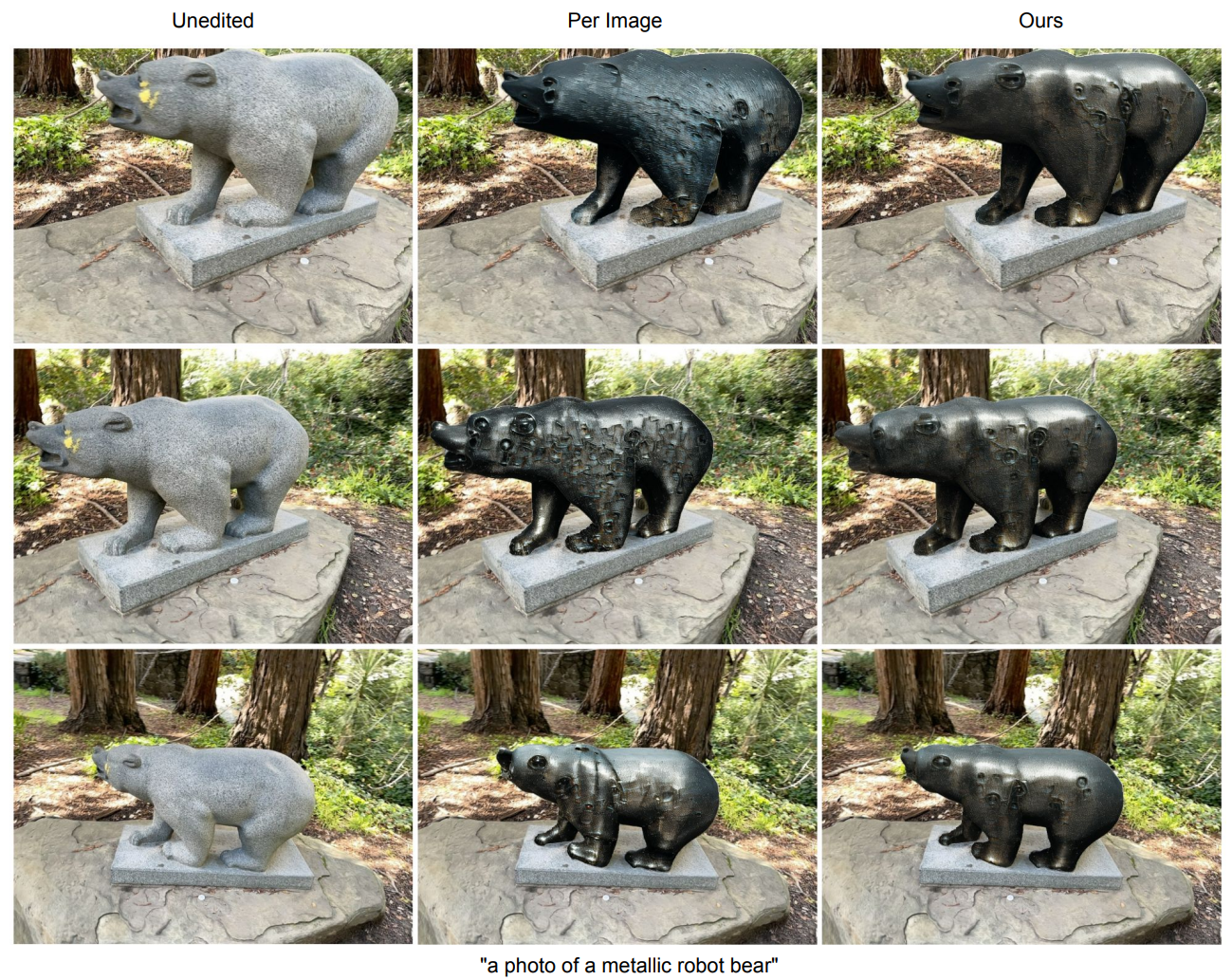

Sparse Editing

Our method can also be utilized for sparse-view editing.

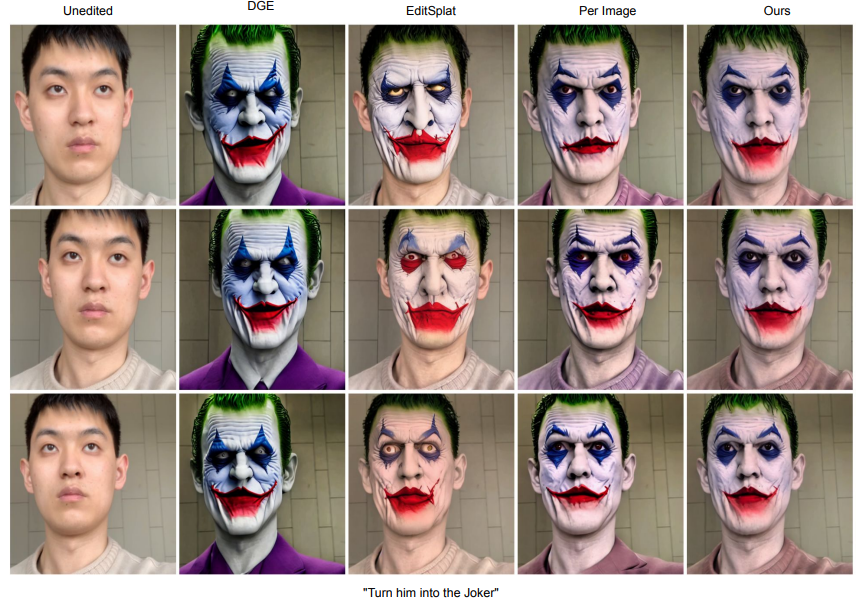

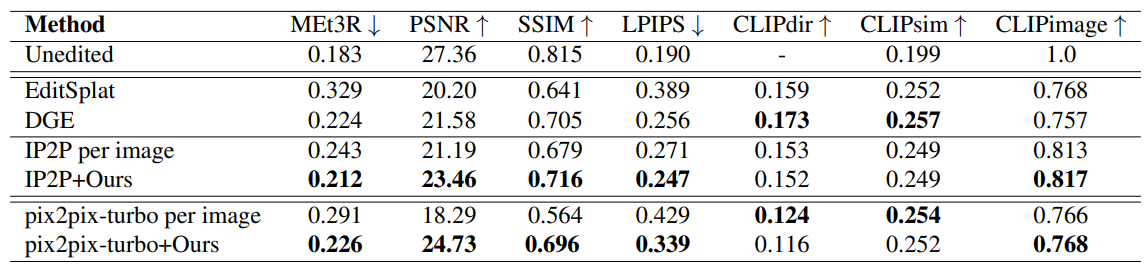

Renderings from edited 3DGS for all test scenes

Here we show results for our method combined with both InstructPix2Pix and pix2pix-turbo.

BibTeX

If you use this work or find it helpful, please consider citing:

@misc{bengtson20263dconsistentmultivieweditingcorrespondence,
  title={3D-Consistent Multi-View Editing by Correspondence Guidance},
  author={Josef Bengtson and David Nilsson and Dong In Lee and Yaroslava Lochman and Fredrik Kahl},
  year={2026},
  eprint={2511.22228},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2511.22228},
}